In the past 24 hours, the AI frontier has seen fresh momentum across multimodal systems, video and image generation, agentic workflows, and open-source tooling. Highlights range from ComfyUI’s rapid integration of next-generation video models to new releases in realistic video generation and multi-agent frameworks. Whether you follow new model releases broadly or ComfyUI news specifically, today’s developments show how quickly the ecosystem is moving toward more powerful, accessible, and autonomous AI capabilities.

ComfyUI Latest Updates Push Video Generation and Workflow Efficiency to New Heights

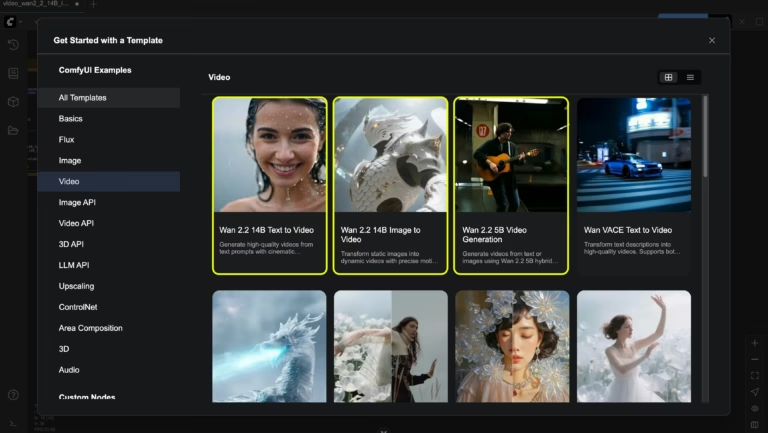

ComfyUI continues to cement its position as the go-to modular platform for advanced diffusion and generation pipelines, with fresh enhancements landing in the past day. Most notably, a new OpenAPI 3.1 specification for the ComfyUI API has been published, streamlining integrations for teams building production-grade workflows.
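
As a rough illustration of what such an integration involves, the sketch below queues a workflow on a locally running ComfyUI server. It assumes the long-standing `POST /prompt` endpoint on the default port 8188 and a workflow already exported in ComfyUI’s API (JSON) format; the helper names are hypothetical, and endpoint details should be checked against the published OpenAPI spec rather than taken from this example.

```python
import json
import urllib.request
import uuid

# Assumption: a ComfyUI server running locally on its default port.
COMFYUI_URL = "http://127.0.0.1:8188"


def build_prompt_request(
    workflow: dict, client_id: str, base_url: str = COMFYUI_URL
) -> urllib.request.Request:
    """Build (without sending) a POST /prompt request that queues an API-format workflow."""
    payload = {"prompt": workflow, "client_id": client_id}
    return urllib.request.Request(
        f"{base_url}/prompt",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def queue_workflow(workflow: dict, base_url: str = COMFYUI_URL) -> dict:
    """Send the request to the server and return its decoded JSON reply."""
    req = build_prompt_request(workflow, client_id=str(uuid.uuid4()), base_url=base_url)
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Usage would look like `queue_workflow(json.load(open("workflow_api.json")))`, where `workflow_api.json` is a hypothetical file exported from the ComfyUI frontend in API format. On success the reply typically carries a `prompt_id` that can be used to look up results via the server’s history endpoint.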

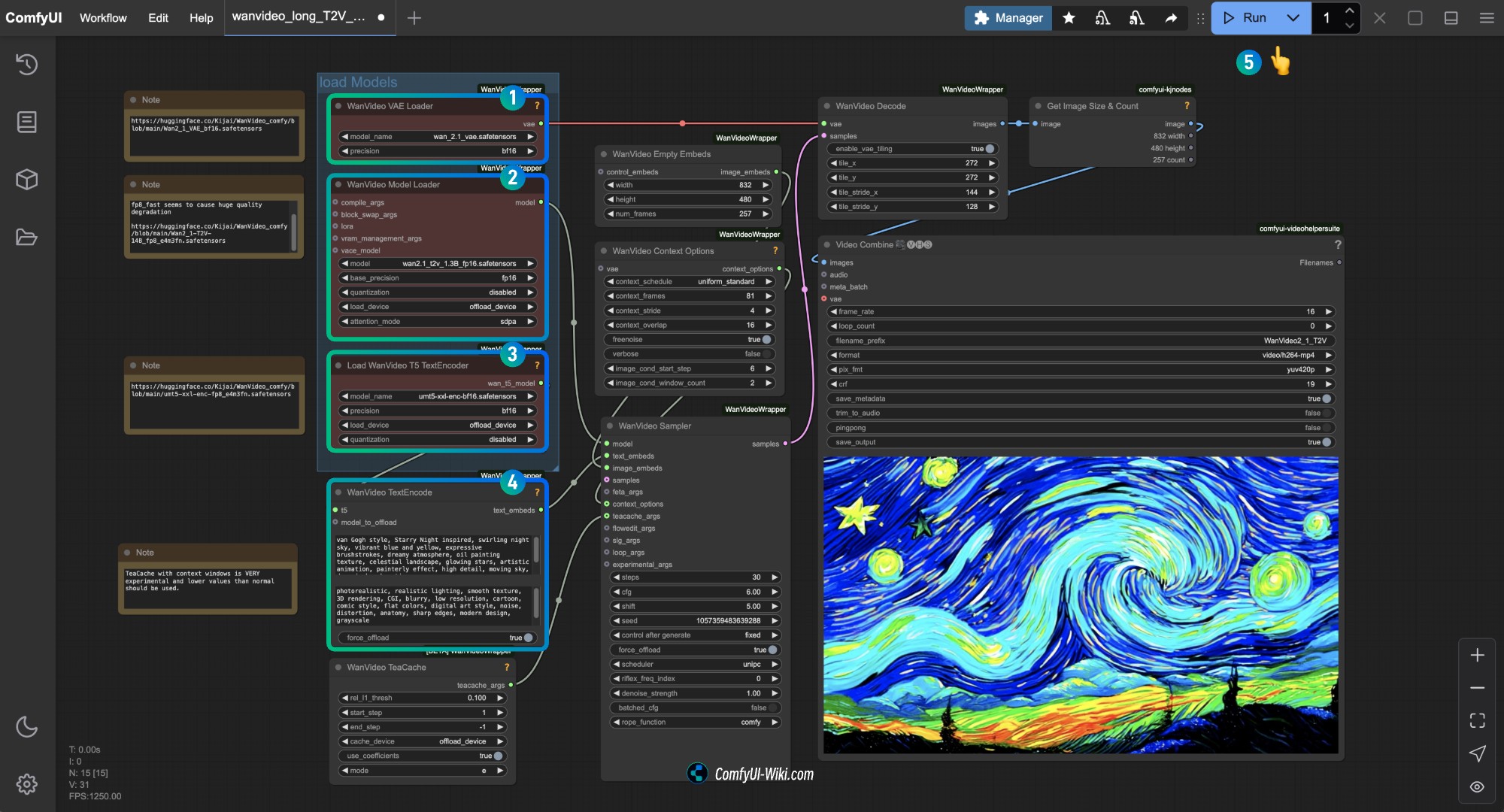

Wan2.1 ComfyUI Workflow – Complete Guide | ComfyUI Wiki

Native support for Wan2.1 video models has been refined, enabling high-quality 720p video creation directly in ComfyUI workflows. The updates also bring LTX generation nodes and updated partner nodes for vector graphics via Quiver arrow models. Frontend 1.10 adds features such as selection toolboxes and workflow templates, making complex video pipelines more intuitive for creators working at the cutting edge of image and video generation.

Additional refinements to the Hunyuan Image-to-Video and ACE-Step workflows further improve consistency and efficiency, in line with the community’s demand for open-source tooling. These changes lower the barrier for hobbyists and professionals alike to experiment with state-of-the-art generative models without proprietary lock-in.

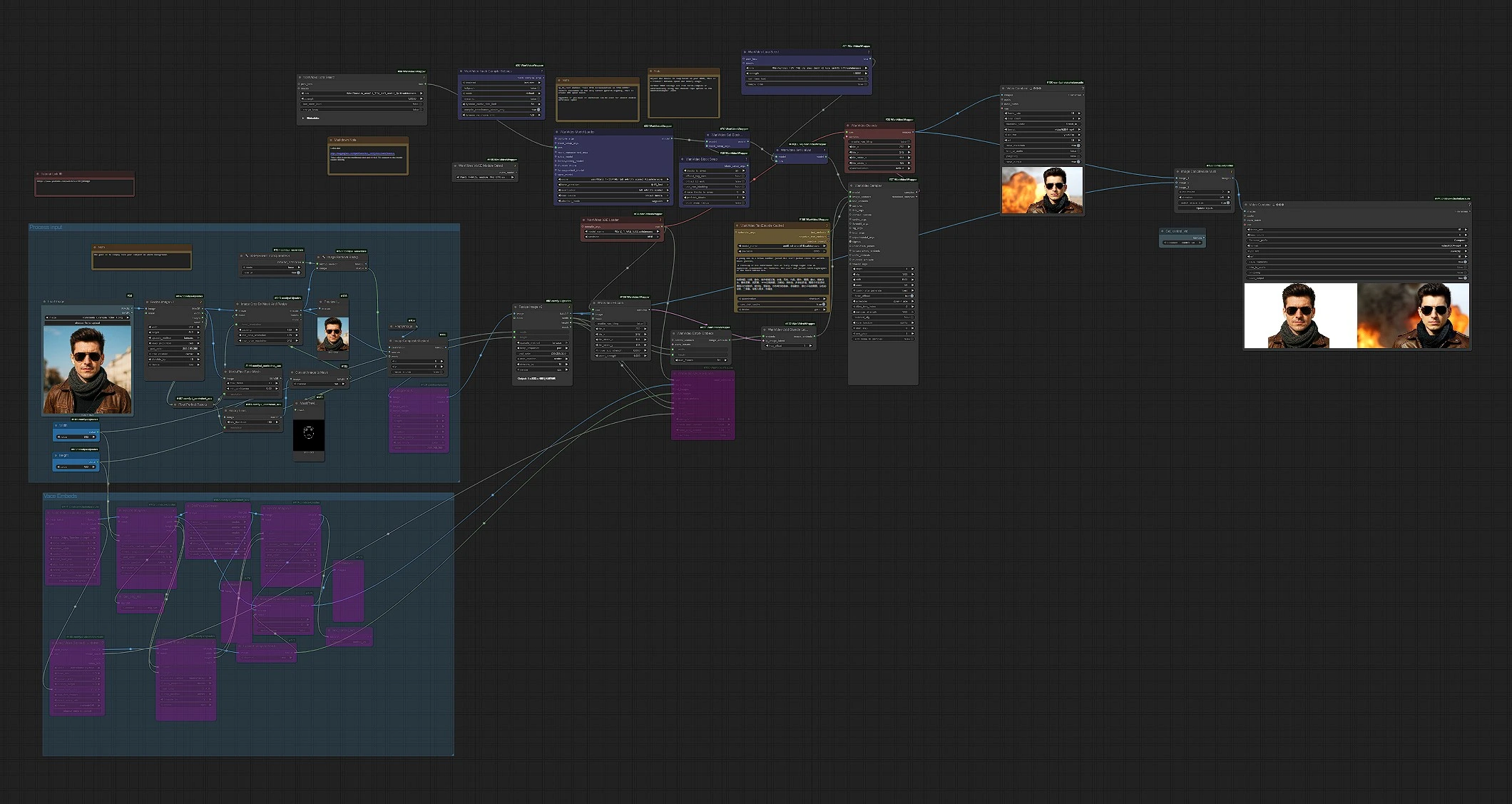

Wan2.1 Stand In in ComfyUI | Character-Consistent Video Workflow

Source: recent ComfyUI GitHub commits and official changelog entries from April 24, 2026.

Video and Image Generation Frontiers Advance with Seedance 2.0, GPT-Image-2, and Kling 3.0

Video and image generation have seen explosive activity, with Seedance 2.0 and GPT-Image-2 now freely available on platforms like GlobalGPT. Seedance 2.0 brings realistic physics and native audio-video synchronization, while GPT-Image-2 offers what is billed as best-in-class image control. These releases mark major steps toward multimodal systems that can handle complex scene dynamics and keep characters consistent across frames.

Kling 3.0 has also gained traction for producing emotionally resonant storytelling videos that move beyond technical generation into narrative depth, showing how frontier models are bridging technical precision and creative expression. Early examples shared across the community show synchronized contrasts between a character’s inner and outer worlds, highlighting the model’s nuanced handling of motion and emotion.

Midjourney v7’s native video-to-image editing agents push further still, maintaining frame-by-frame consistency from video clips and text prompts. Combined with ComfyUI’s Wan2.1 integrations, these tools are making high-impact video production accessible to filmmakers, marketers, and developers tracking the latest AI advancements.

Source: Community updates on X (April 24, 2026) and related workflow announcements.

Agentic AI Breakthroughs: SwarmKit, Agentic Wallets, and Production-Ready Multi-Agent Systems

Agentic AI took center stage with OpenAI’s open-sourcing of SwarmKit, a lightweight Python library that simplifies defining multi-agent teams via YAML and decorators. Its automatic routing, fallback logic, and cost-aware execution are pitched as making complex agentic workflows production-ready in minutes, directly addressing the scalability challenges of deploying frontier AI models.
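
SwarmKit’s actual API is not described here beyond YAML and decorators, so the sketch below does not use it. It is a plain-Python illustration, under stated assumptions, of the three ideas named above: send a task to the cheapest agent able to handle it, fall back to the next one on failure, and stop once a cost budget would be exceeded. `Agent`, `route`, and the cost figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    """Hypothetical agent record: name, estimated cost per call, and two callables."""
    name: str
    cost_per_call: float
    handles: Callable[[str], bool]  # can this agent take the task?
    run: Callable[[str], str]       # does the work; may raise on failure


def route(task: str, agents: list[Agent], budget: float) -> tuple[str, str]:
    """Route a task to the cheapest capable agent, falling back on failure.

    Each attempt is charged against `budget`; returns (agent_name, result) or
    raises RuntimeError if no attempt succeeds within the budget.
    """
    candidates = sorted(
        (a for a in agents if a.handles(task)), key=lambda a: a.cost_per_call
    )
    spent = 0.0
    errors: list[str] = []
    for agent in candidates:
        if spent + agent.cost_per_call > budget:
            break  # candidates are sorted by cost, so no later agent fits either
        spent += agent.cost_per_call
        try:
            return agent.name, agent.run(task)
        except Exception as exc:  # fallback: note the failure, try the next agent
            errors.append(f"{agent.name}: {exc}")
    raise RuntimeError(f"no agent completed {task!r} within budget; errors={errors}")
```

Trying agents in order of cost is only one simple way to make execution cost-aware; a production router would more likely weigh an estimate of answer quality against price.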

Building Multi-Agent AI Systems From Scratch: OpenAI vs. Ollama | Towards AI

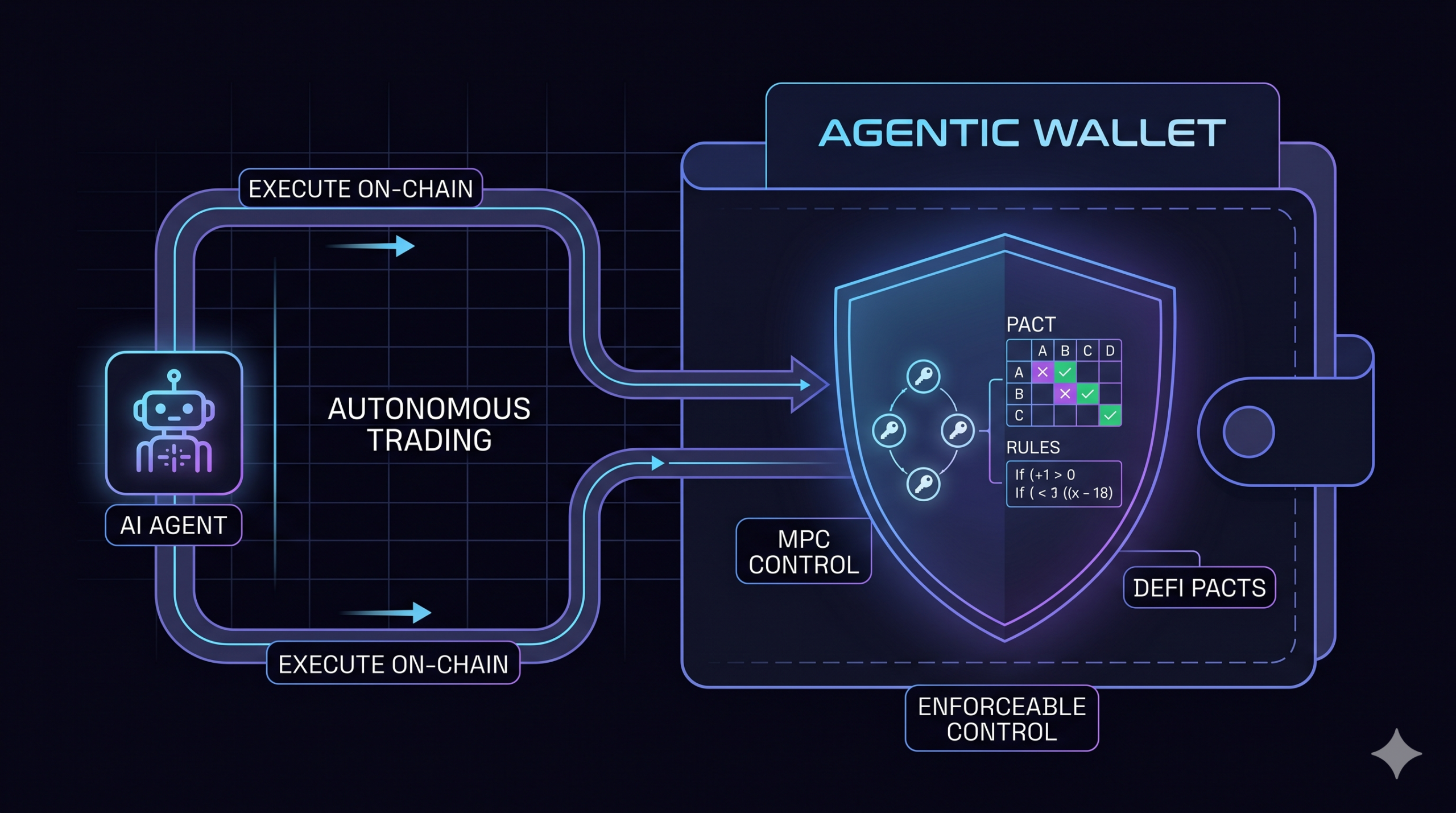

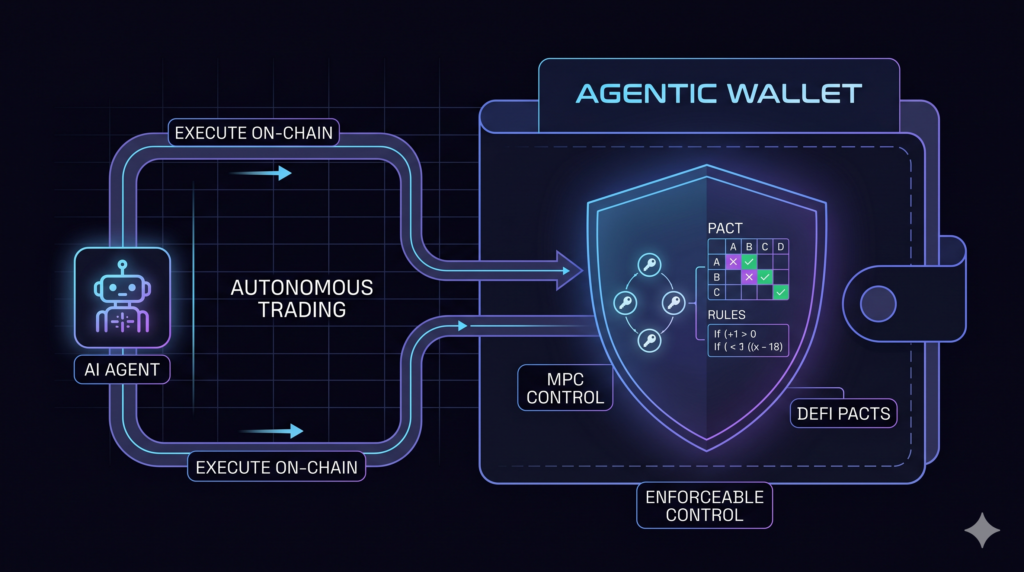

In parallel, the agentic wallet landscape is maturing rapidly. Cobo Global launched its Agentic Wallet (CAW) with what it calls world-first MPC-based self-custodial security, a human-machine authorization protocol (Pact), and recipe-driven skill templates compatible with LangChain, Claude MCP, and CrewAI. This infrastructure lets AI agents execute real on-chain trades and DeFi actions under verifiable human oversight, targeting long-standing security and autonomy issues.
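
The sketch below shows the general shape of policy-gated autonomy: an agent acts alone inside limits a human has granted and escalates anything beyond them. It is not Cobo’s Pact protocol or API, whose details are not given here; the `Policy` fields, thresholds, and `authorize` function are hypothetical, and MPC key management is out of scope.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"              # agent may execute on its own
    NEEDS_HUMAN = "needs_human"  # escalate to a human approver
    DENY = "deny"                # never allowed under this policy


@dataclass(frozen=True)
class Policy:
    """Hypothetical spending policy a human grants to an agent."""
    allowed_actions: frozenset[str]
    allowed_contracts: frozenset[str]
    auto_limit_usd: float  # at or below this, the agent acts alone
    hard_limit_usd: float  # above this, the action is refused outright


@dataclass(frozen=True)
class Action:
    kind: str          # e.g. "swap" or "transfer"
    contract: str      # target contract or counterparty address
    amount_usd: float


def authorize(action: Action, policy: Policy) -> Decision:
    """Check an agent's proposed action against the human-granted policy."""
    if action.kind not in policy.allowed_actions:
        return Decision.DENY
    if action.contract not in policy.allowed_contracts:
        return Decision.DENY
    if action.amount_usd > policy.hard_limit_usd:
        return Decision.DENY
    if action.amount_usd > policy.auto_limit_usd:
        return Decision.NEEDS_HUMAN
    return Decision.ALLOW
```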

Complementary developments include Claude Code Interpreter 2.0’s persistent workspaces and AlphaCode 2’s real-time competitive coding performance, which push agentic systems closer to autonomous, human-level software engineering.

Source: X discussions and announcements from April 23-24, 2026, including agentic wallet deep dives.

Multimodal and Research Breakthroughs: Omni Models with Context Unrolling and New arXiv Agentic Papers

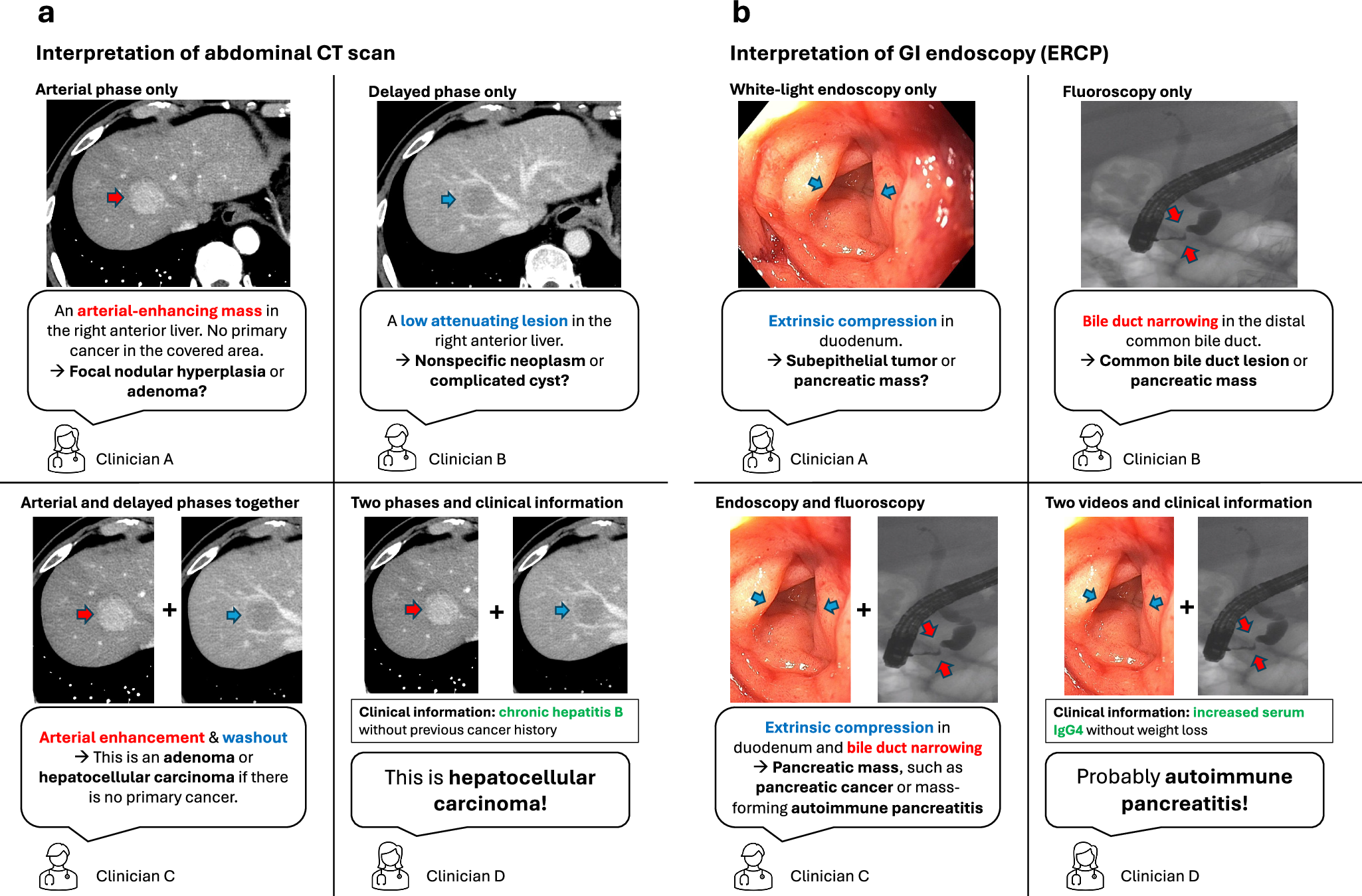

A unified multimodal “Omni” model architecture featuring Context Unrolling has emerged, enabling native reasoning across text, images, videos, 3D geometry, and hidden representations before generating outputs. This approach delivers strong results on generation and understanding benchmarks while supporting in-context creation across modalities.

Multimodal generative AI for interpreting 3D medical images and videos | npj Digital Medicine

Fresh arXiv submissions from the past 24 hours reinforce this momentum: “Tool Attention Is All You Need” introduces dynamic tool gating to eliminate overhead in scalable agentic workflows; “Learning to Communicate” optimizes end-to-end multi-agent language systems; and HiCrew brings hierarchical multi-agent collaboration to long-form video understanding. Additional papers on GeoMind (agentic lithology classification) and BioMiner (multimodal protein-ligand mining) highlight targeted frontier applications in science and reasoning.
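
The gating mechanism in “Tool Attention Is All You Need” is not described here beyond its name, so the following is only a generic illustration of the underlying idea: rather than loading every tool schema into an agent’s context, score the tools against the request and expose only the top few. Jaccard word overlap stands in for whatever learned relevance signal a real system would use; `gate_tools` and the sample tool descriptions are hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercased word set; a crude stand-in for a learned relevance model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def gate_tools(query: str, tools: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k tool names whose descriptions best match the query.

    Relevance is Jaccard overlap between word sets; tools with zero overlap are
    dropped entirely, so only the returned tools' schemas would go into context.
    """
    q = tokens(query)
    scored = []
    for name, description in tools.items():
        d = tokens(description)
        union = q | d
        score = len(q & d) / len(union) if union else 0.0
        if score > 0:
            scored.append((score, name))
    scored.sort(key=lambda s: (-s[0], s[1]))  # best score first; ties broken by name
    return [name for _, name in scored[:k]]


tools = {
    "get_weather": "get the current weather forecast for a city",
    "search_flights": "search flights between two airports on a date",
    "run_sql": "run a sql query against the analytics database",
}
print(gate_tools("what is the weather forecast in Paris", tools, k=1))
```

For this query, only `get_weather` would be offered to the model, so the other two schemas never consume context tokens.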

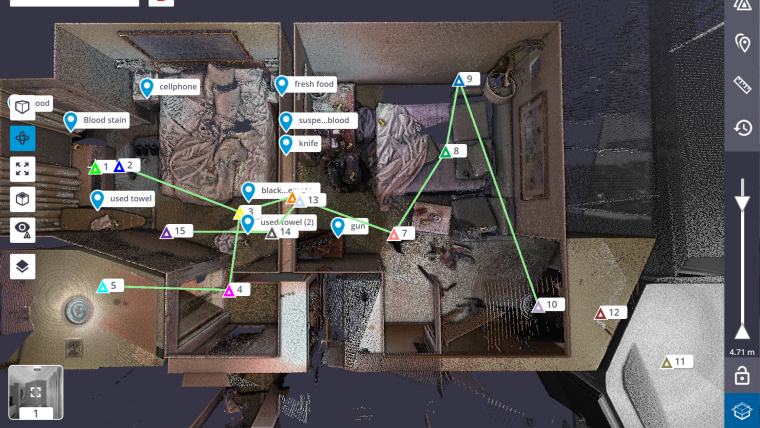

Mamba-based 3D architectures, reported to reach 120 fps reconstruction with 40% less memory, further expand real-time capabilities for AR/VR and robotics.

How 3D scanning rebuilds crime scenes for courtrooms | GIM International

These research breakthroughs exemplify the rapid pace of frontier AI development in 2026, blending open-source accessibility with high-impact technical innovation.

Source: arXiv recent listings (April 2026 submissions) and researcher posts on X from April 24, 2026.

The images used in this article are sourced from publicly available channels on the internet. They are used solely for the purposes of news commentary, visual illustration, and explanatory reference, and do not constitute commercial use. The author of this article does not own the copyright to these images and makes no claim to any rights over them. If any copyright issues arise regarding these images, please contact the article’s author, and we will promptly address the matter or remove the relevant content.