The past 24 hours have delivered a surge of frontier AI models, agentic research, and open-source tooling that is reshaping how creators and researchers build with AI. Highlights include reasoning-driven image generation arriving in ComfyUI, a massive new open foundation model, and a memory framework for autonomous agents. Together they point to faster progress in multimodal systems, image and video generation, and the ComfyUI ecosystem. These releases are more than incremental: they unlock new workflows for consistent generation, agentic reasoning, and high-performance local inference.

OpenAI Drops ChatGPT Images 2.0: Reasoning-First Image Generation Goes Mainstream

OpenAI has officially launched ChatGPT Images 2.0, a major leap in multimodal image generation that emphasizes planning, self-checking, and iteration rather than single-shot outputs. The new model delivers sharper 2K resolution, dramatically improved text rendering, multilingual support, and cleaner UI mockups, maps, and infographics. Early reports highlight its ability to “reason before it generates,” producing outputs with coherent composition and fewer artifacts.

The release also reached ComfyUI almost immediately. Through Partner Nodes, the model (listed in ComfyUI as GPT Image 2.0) is now available inside ComfyUI workflows, letting users call its reasoning capabilities from their own node graphs instead of relying solely on the ChatGPT web interface. Users are already sharing node graphs that chain GPT Image 2.0 with existing tools for storyboards and product variants.

Source: OpenAI official announcements and the ComfyUI integration rollout (April 22-23, 2026).

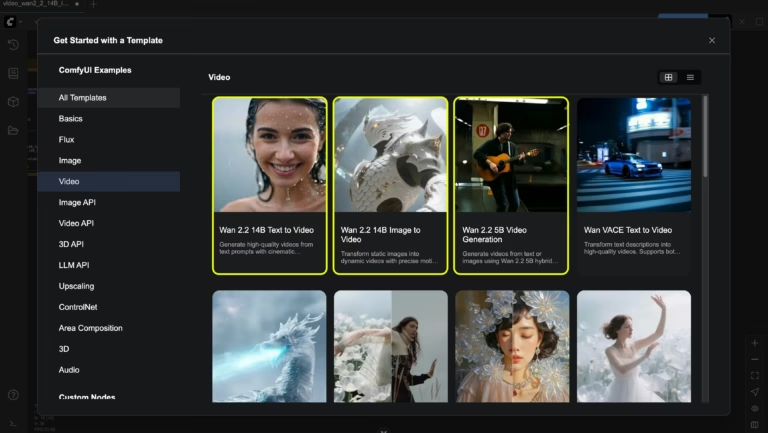

ComfyUI Latest Updates: Native GPT Image 2.0 Support, Consistent Multi-Image Generation, and RTX Video Acceleration

ComfyUI remains the go-to node-based interface for frontier image and video generation. In the past 24 hours, the official ComfyUI account announced full support for OpenAI GPT Image 2.0 through Partner Nodes. The accompanying v0.19.4 update enables a single prompt to generate up to 8 consistent images with character and object continuity. Supported aspect ratios now span 3:1 to 1:3, and consistency no longer depends on manually juggling seeds.

NVIDIA’s ongoing RTX optimizations have also brought new accelerations: up to 3x performance and a 60% VRAM reduction for video and image workflows, achieved through PyTorch-CUDA optimizations and NVFP4 and NVFP8 precision. Models like Lightricks LTX-2 and Black Forest Labs FLUX.2 variants now run natively in ComfyUI, with 4K AI video generation supported on RTX GPUs. Creators report running video super-resolution and complex multimodal pipelines entirely on local hardware.

These latest ComfyUI updates make high-end frontier AI models accessible on consumer hardware, cementing the tool’s role in open-source innovation.

ComfyUI node graph demonstrating GPT Image 2.0 integration for planned, iterative generation.

Source: Official ComfyUI X posts and NVIDIA RTX AI Garage updates (April 22-23, 2026).

NVIDIA Releases Llama 3.1 Nemotron Ultra 253B: A New Open Foundation Model Beast

NVIDIA has released Llama 3.1 Nemotron Ultra 253B, a massive open-weight foundation model optimized via Neural Architecture Search and designed to run efficiently on a single 8x H100 node. Reported benchmarks show strong results in scientific reasoning (GPQA Diamond: 76.0%), complex math (MATH 500: 97.3%), and coding (HumanEval: 88.4%). A reasoning mode that can be toggled on or off and a 128K context window make it well suited to agentic AI and long-form multimodal tasks.
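To see why an 8x H100 node is a natural deployment target for a 253B-parameter model, a rough back-of-the-envelope estimate helps. The sketch below counts weight memory only and assumes 80 GB per H100; it ignores the KV cache, activations, and runtime overhead, so real headroom is smaller than these numbers suggest. The function names are illustrative, not part of any NVIDIA tooling.

```python
H100_MEMORY_GB = 80  # assumption: 80 GB H100 variant


def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Memory needed for the weights alone, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


def fits_on_node(num_params: float, bits_per_param: float,
                 gpus: int = 8, gpu_memory_gb: float = H100_MEMORY_GB):
    """Return (needed_gb, available_gb, fits) for a single multi-GPU node."""
    needed = weight_memory_gb(num_params, bits_per_param)
    available = gpus * gpu_memory_gb
    return needed, available, needed <= available


for label, bits in [("BF16", 16), ("FP8", 8)]:
    needed, available, ok = fits_on_node(253e9, bits)
    print(f"{label}: weights need {needed:.0f} GB of {available:.0f} GB -> fits: {ok}")
```

At 16 bits per parameter the weights alone take about 506 GB of the node's 640 GB, which leaves limited room for long-context caches; at 8 bits the footprint halves to about 253 GB.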

The model is hosted on NVIDIA’s DGX Cloud behind an OpenAI-compatible API, and the same service gives developers free access to more than 80 other models, including DeepSeek, MiniMax, and GLM variants. This release strengthens the open-source ecosystem and gives ComfyUI users another high-performance language-model backend to build on.
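Because the API is described as OpenAI-compatible, a request should follow the familiar chat-completions shape. The sketch below only builds the request and does not send it. The base URL and model identifier are placeholders, not real values, and the "detailed thinking on/off" system prompt used as the reasoning toggle is an assumption that should be checked against the official model card.

```python
import json
import urllib.request

BASE_URL = "https://example.invalid/v1"  # placeholder, not the real endpoint
MODEL_ID = "nemotron-ultra-253b"         # hypothetical model identifier


def build_chat_request(prompt, api_key, reasoning=True,
                       base_url=BASE_URL, model=MODEL_ID):
    """Build (but do not send) an OpenAI-style chat completion request."""
    # Assumption: reasoning is toggled through the system prompt.
    system_prompt = "detailed thinking on" if reasoning else "detailed thinking off"
    payload = {
        "model": model,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": prompt},
        ],
        "max_tokens": 512,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


request = build_chat_request("Outline a storyboard in five shots.", api_key="YOUR_API_KEY")
print(request.full_url)
print(json.loads(request.data)["messages"][0])
# To send it for real: urllib.request.urlopen(request), once the URL and key are valid.
```

Keeping the request builder separate from the network call makes it easy to swap in a different base URL or model name once the real values are known.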

Source: NVIDIA official model documentation and community announcements (April 22, 2026).

Google Cloud AI Introduces ReasoningBank: Game-Changing Memory for Agentic AI

In a significant step for agentic AI, Google Cloud AI Research (with collaborators from UIUC and Yale) unveiled ReasoningBank, a memory framework that distills reasoning strategies from both agent successes and failures into reusable, generalizable patterns. Unlike traditional logs that simply record actions, ReasoningBank extracts why something worked or failed, enabling agents to generalize across tasks without retraining.

This frontier development addresses a core limitation in current agentic systems: the inability to learn from experience at a strategic level. Early tests show improved performance on complex, multi-step workflows, positioning it as essential infrastructure for the next wave of autonomous AI agents.
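The core idea, storing distilled strategies rather than raw action logs and retrieving them for new tasks, can be illustrated with a toy sketch. This is not the ReasoningBank implementation: every class and method name here is hypothetical, the strategy text is supplied by hand where a real system would presumably use a model to distill it, and retrieval uses simple word overlap as a stand-in for whatever similarity search the framework actually uses.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    task: str           # short description of the task the lesson came from
    strategy: str       # the distilled "why it worked / why it failed" lesson
    from_success: bool  # failures are stored too, not just successes


@dataclass
class ToyReasoningBank:
    items: list = field(default_factory=list)

    def add(self, task: str, success: bool, strategy: str) -> None:
        """Store a distilled strategy from a finished attempt."""
        self.items.append(MemoryItem(task, strategy, success))

    def retrieve(self, task: str, k: int = 1) -> list:
        """Return the k items whose task description shares the most words."""
        words = set(task.lower().split())

        def overlap(item: MemoryItem) -> int:
            return len(words & set(item.task.lower().split()))

        return sorted(self.items, key=overlap, reverse=True)[:k]


bank = ToyReasoningBank()
bank.add("find cheapest flight on booking site", success=False,
         strategy="Sort by price before reading results; the default order hid cheaper options.")
bank.add("reset password in admin panel", success=True,
         strategy="Open the user settings page first; the reset link lives there.")

for item in bank.retrieve("find a flight to Tokyo"):
    origin = "success" if item.from_success else "failure"
    print(f"Hint (from a past {origin}): {item.strategy}")
```

An agent would prepend the retrieved hints to its prompt before attempting the new task, which is how lessons carry over without any retraining.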

Source: Google Cloud AI Research announcement via MarkTechPost (April 23, 2026).

Broader Open-Source Momentum: Hugging Face ml-intern and Agent Sandbox Innovations

The open-source community is keeping pace. Hugging Face released ml-intern, an open-source ML research agent; early users receive $1K in GPU credits and Anthropic access. Meanwhile, Tencent Cloud open-sourced Cube Sandbox, a RustVMM/KVM-based runtime for AI agents that offers sub-60ms cold starts and E2B SDK compatibility, making it a good fit for secure, production-grade agentic deployments.

These tools complement ComfyUI’s node-based extensibility, allowing developers to chain image/video generation with full agentic reasoning loops.

Why These 2026 AI Advancements Matter for Frontier Enthusiasts

Today’s releases underscore three converging trends in cutting-edge AI news: (1) reasoning-native multimodal systems that plan before they create, (2) hardware-optimized open foundation models that democratize access, and (3) memory and workflow frameworks that push agentic AI from prototype to practical. Whether you’re running local ComfyUI workflows for 4K video generation or building multi-agent systems with Nemotron backends, the frontier is moving faster than ever.

The latest ComfyUI updates in particular stand out as the practical bridge between closed frontier models and open innovation, turning yesterday’s web-only features into today’s local, extensible pipelines.

Stay tuned for more daily cutting-edge AI updates.