The AI landscape continues to evolve rapidly, with several significant developments over the past few days. From new flagship models to strategic shifts by leading companies, here’s a roundup of the key happenings.

Anthropic released Claude Opus 4.7 on April 16, a notable upgrade in complex reasoning and agentic workflows. The model shows measurable improvements on benchmarks such as SWE-bench and GPQA, positioning it as a strong option for coding, long-context tasks, and professional applications. Meanwhile, Anthropic continues to restrict its most advanced system, Claude Mythos Preview, to select partners because of its capabilities in sensitive areas such as cybersecurity simulations.

Google has been active as well, open-sourcing Gemma 4 under Apache 2.0 and launching a native Gemini app for Mac. The Gemini 3.1 variants, including the Pro and Flash-Lite editions, emphasize multimodal reasoning and efficiency, offering faster response times while maintaining strong performance on reasoning tasks.

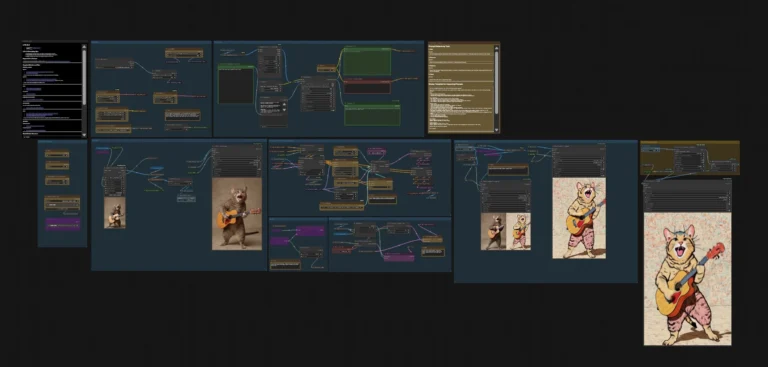

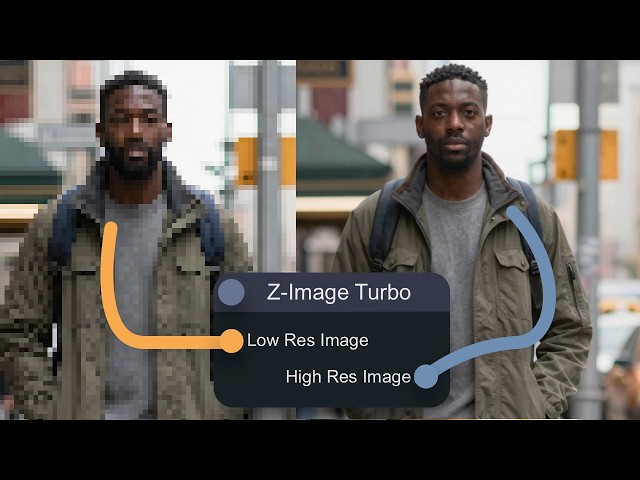

On the open-source and local model front, community discussions highlight Flux.2 Klein as a popular choice for realistic image generation, often paired with other models such as Qwen or Chroma for specialized workflows. Feedback on platforms like Reddit suggests that Flux.2 Klein 9B performs well for photorealistic outputs and image editing, while smaller, efficient models such as Gemma 4 and GLM-5.1 are gaining traction for local deployments.

OpenAI is reportedly shifting focus toward enterprise platforms, with internal memos outlining plans for deeper integration, agent systems, and full-stack solutions. Business customers now represent a growing portion of revenue, reflecting a broader industry trend toward reliable, scalable AI deployments rather than purely consumer-facing features.

Meta continues to ship releases such as Llama 4 variants and Muse Spark, while the broader ecosystem puts increased emphasis on cost efficiency, larger context windows, and practical workflow integration. Storage optimization techniques, such as keeping lightweight LoRA adapters alongside a single shared base model instead of storing a full checkpoint for every fine-tune, are also gaining attention for reducing storage overhead in production environments.
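To make the storage argument concrete, here is a rough back-of-the-envelope sketch of why a LoRA adapter is so much smaller than a full weight matrix. LoRA replaces an update to a `d_out x d_in` matrix with two low-rank factors of shapes `d_out x r` and `r x d_in`. The layer size (4096 x 4096), the rank (16), and the 2-byte fp16 storage are illustrative assumptions, not figures from any model mentioned above.

```python
def full_matrix_params(d_out: int, d_in: int) -> int:
    """Parameters in one dense weight matrix of shape (d_out, d_in)."""
    return d_out * d_in


def lora_adapter_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in a LoRA adapter: B is (d_out, rank), A is (rank, d_in)."""
    return rank * (d_out + d_in)


# Hypothetical values chosen only for illustration.
D_OUT, D_IN, RANK = 4096, 4096, 16
BYTES_PER_PARAM = 2  # assuming fp16/bf16 storage

full = full_matrix_params(D_OUT, D_IN)
adapter = lora_adapter_params(D_OUT, D_IN, RANK)

print(f"full matrix:  {full:,} params, {full * BYTES_PER_PARAM / 2**20:.2f} MiB")
print(f"LoRA adapter: {adapter:,} params, {adapter * BYTES_PER_PARAM / 2**20:.2f} MiB")
print(f"reduction:    {full // adapter}x for this one matrix")
```

Under these assumptions the adapter holds 131,072 parameters against 16,777,216 for the full matrix, a 128x reduction for that single layer. Real savings depend on how many layers are adapted and on the rank chosen, so treat this as an order-of-magnitude illustration only.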

These updates underscore a maturing AI industry in which performance gains are balanced against accessibility, specialization, and responsible deployment. Whether for creative work, coding, or enterprise applications, these recent developments offer new tools and considerations for users and developers alike.

Stay tuned for more daily AI updates.