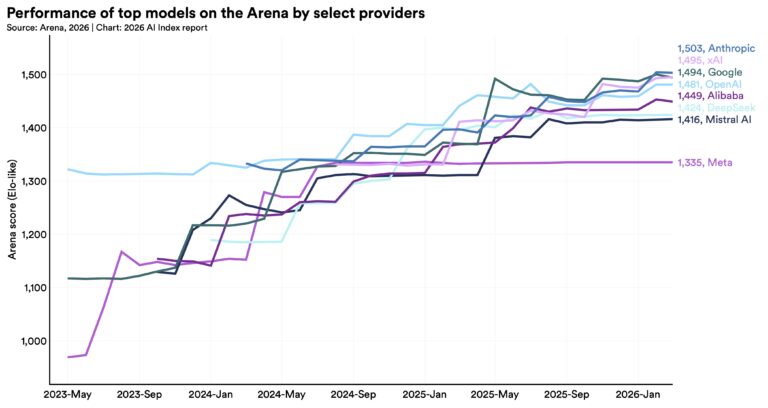

In the fast-moving world of artificial intelligence, the past 24 hours delivered several noteworthy developments across hardware innovation, model roadmaps, and practical tooling. From major chip design collaborations to updates on frontier model ambitions, these stories highlight how the AI industry continues to push boundaries in both infrastructure and application layers. Here’s a clear, factual roundup of the top AI news items making waves today.

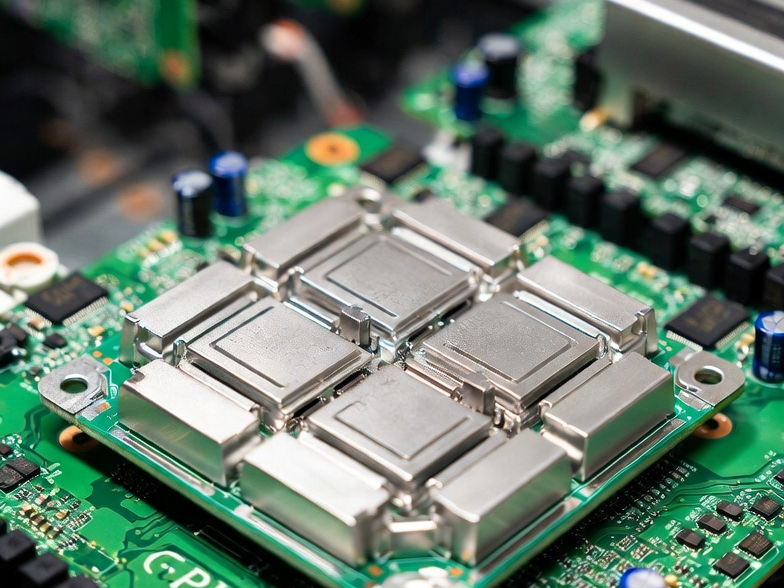

First, Google is reportedly in active discussions with Marvell Technology to co-develop two specialized AI chips aimed at improving TPU performance and inference efficiency. According to multiple reports circulating on April 19, 2026, one chip is a dedicated Memory Processing Unit (MPU) designed to complement Google’s existing Tensor Processing Units, while the second is a next-generation TPU design optimized for running large AI models. If the talks lead to a deal, the partnership could reshape AI hardware supply chains by targeting two key bottlenecks in large-scale deployments: memory handling and inference speed. Industry observers note that custom silicon collaborations like this are becoming increasingly important as companies scale AI workloads beyond what general-purpose GPUs can handle efficiently. Google’s TPUs have long been a cornerstone of its cloud AI services, and Marvell’s experience with custom ASICs could speed up gains in energy efficiency and cost-effectiveness for data centers. The move fits a broader industry trend: as models grow larger and real-time demands increase, specialized chips are becoming essential.

Second, xAI and Elon Musk offered fresh details on the Grok 5 development timeline, reinforcing expectations that the model could be a step toward AGI-level capabilities. On April 19, discussion intensified around Musk’s recent estimate of a 10% and rising chance that Grok 5 could achieve something indistinguishable from artificial general intelligence. Grok 5 remains in training on the Colossus supercluster with no public release yet, but the roadmap lists intermediate releases as precursors: Grok 4.4, targeting around 1T parameters in early May, and Grok 4.5, at 1.5T parameters, later in May. A public beta of Grok 5 is widely anticipated in Q2 2026, with full API access possibly following in Q3. The update comes amid rapid iteration on the Grok series, including the Grok 4.3 beta rolled out in April, which emphasized stronger reasoning, multimodal processing, and fewer hallucinations. With scientific discovery and understanding the universe still central to xAI’s mission, these timeline details are particularly relevant for developers and enterprises tracking frontier model progress in 2026.

Third, Anthropic is building on its recent Claude Opus 4.7 release with Claude Design, a new research preview tool powered by the upgraded model. Launched in mid-April and drawing continued attention since, Claude Design lets users generate polished prototypes, slides, one-pagers, and visual assets by collaborating with Claude in natural language. Opus 4.7 itself brings notable improvements in advanced software engineering, complex multi-step reasoning, vision tasks, and long-horizon agentic workflows, along with a 1M-token context window and adaptive thinking. Pricing is unchanged from prior versions at $5 per million input tokens and $25 per million output tokens, and the model is available across Claude products, the API, and major cloud platforms. The new tool reflects Anthropic’s emphasis on practical, production-ready applications beyond raw model performance, helping teams move from ideation to deployable outputs in design and knowledge work.
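To make those per-token rates concrete, here is a minimal Python sketch that estimates the cost of a single request. It assumes flat pricing at the $5 (input) and $25 (output) per-million-token rates reported above and ignores any discounts or surcharges, such as prompt caching or batch processing, that may apply in practice. The function name `estimate_cost_usd` is hypothetical and not part of any Anthropic SDK.

```python
# Hypothetical helper: estimate the cost of one request from the per-million-token
# prices reported in this article. Assumes flat pricing with no caching, batch,
# or other discounts or surcharges.

INPUT_PRICE_PER_MTOK = 5.00    # USD per 1,000,000 input tokens (as reported)
OUTPUT_PRICE_PER_MTOK = 25.00  # USD per 1,000,000 output tokens (as reported)


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in US dollars for a single request."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    # Convert each token count to millions of tokens, then apply its rate.
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_MTOK
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MTOK
    return input_cost + output_cost


if __name__ == "__main__":
    # Example: a 200,000-token prompt that produces a 10,000-token answer.
    cost = estimate_cost_usd(200_000, 10_000)
    print(f"${cost:.2f}")  # prints $1.25
```

Under these assumptions, the example request costs $1.00 for input plus $0.25 for output. Because output tokens are priced at five times the input rate, generation-heavy workloads shift the balance toward output costs quickly.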

Additionally, the open-source AI landscape kept its momentum, as updated lists of top local models highlighted strong performers such as Qwen 3.5, Gemma 4, GLM-5 variants, and DeepSeek V3.2 for various use cases. These models are increasingly recommended for local deployment because they balance capability with efficiency on consumer hardware. Although none of them is a brand-new release from the last day, their prominence in April 2026 roundups underscores the accelerating shift toward accessible, high-performing open-weight options that developers can run offline or fine-tune without large cloud costs.

Finally, OpenAI expanded its OpenAI Academy initiative with global events focused on practical AI skills, reaching millions of people through live sessions, learning resources, and community building. Events in locations ranging from Warsaw to Texas reflect a growing emphasis on education and adoption as AI tools mature.

These developments illustrate the multifaceted progress in AI: hardware foundations getting stronger, roadmaps clarifying paths to advanced intelligence, and tools making complex models more usable. The interplay between chip-level innovations and model capabilities will likely define competitive edges in the coming months.

Stay tuned for more daily AI updates.